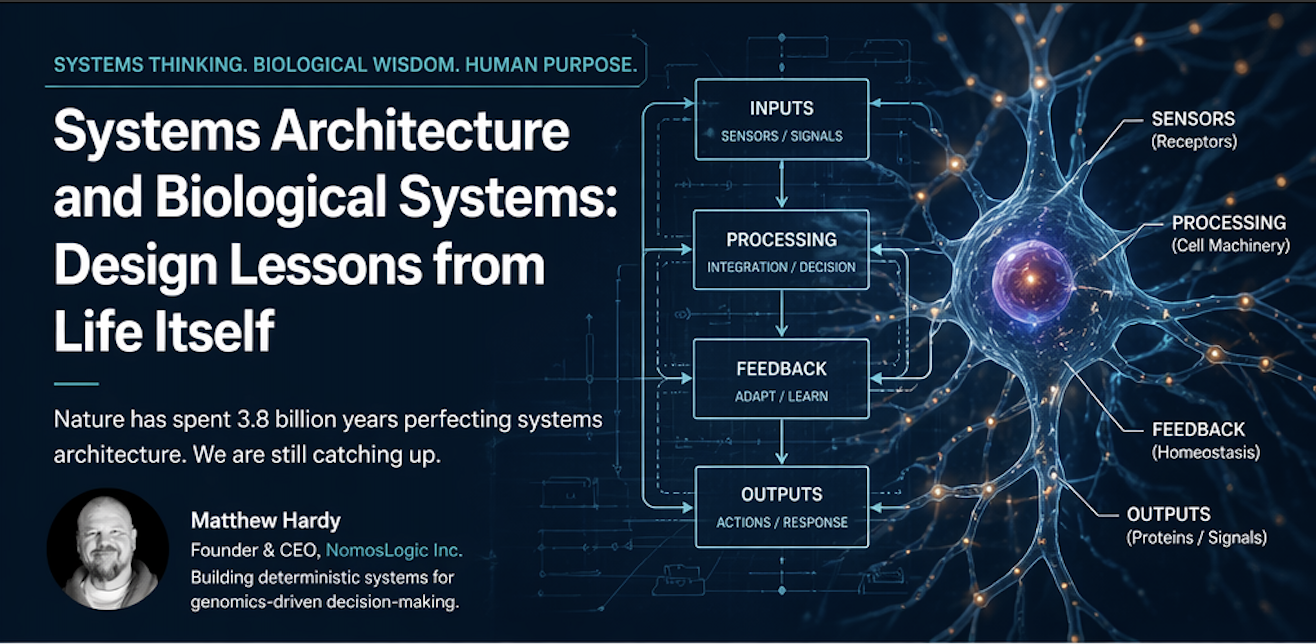

How NomosLogic Turns Clinical Chaos into Real‑Time Decisions

In the era of “Big Data,” healthcare is still strangely small.

Most clinical data lives in the wrong shape: unsearchable PDFs, faxed consult notes, proprietary lab portals, and genetic reports that never touch the EHR. Providers know the signal is there—but it’s locked inside formats that humans can read and computers can’t.

At NomosLogic, we learned that the first step to real longevity medicine isn’t more data.

It’s better ingestion and fusion.

From Manual Entry to Multi‑Omic Ingestion

For years, most “health apps” asked patients to manually type in their lab results:

“What was your last HbA1c?”

“Enter your LDL.”

“Upload a PDF and we’ll…do our best.”

This is slow, error‑prone, and fundamentally misses the point. A human typing numbers into a phone is not a data pipeline.

NomosLogic’s platform starts by treating every lab report, PDF, and data feed as a computable object, not just a document. Our ingestion stack does three things in seconds:

Reads the report like a map

We use computer vision and layout‑aware parsing to identify:

The biomarker (e.g., HbA1c, creatinine, eGFR)

The value (e.g., 5.1, 1.36, 47)

The unit (%, mg/dL, mL/min/1.73m²)

The applicable reference ranges and flags

Normalizes it across sources

Quest, LabCorp, and a local hospital can all report Vitamin D differently:

ng/mL vs nmol/L

Slightly different reference ranges

Different codes or even free‑text labels

Our Normalization Layer standardizes everything into a unified schema backed by LOINC, SNOMED CT, and other clinical vocabularies so one longitudinal record survives across providers, time, and geography.
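As a minimal sketch of that normalization step for the Vitamin D case: the helper `normalize_vitamin_d`, the label table, and the choice of LOINC code below are illustrative assumptions, not NomosLogic's actual schema. The conversion factor for 25‑hydroxyvitamin D (1 ng/mL is about 2.496 nmol/L) is the standard one.

```python
from dataclasses import dataclass

# 25-hydroxyvitamin D: 1 ng/mL is about 2.496 nmol/L.
NMOL_PER_L_PER_NG_PER_ML = 2.496

# Hypothetical mapping from free-text source labels to one canonical code.
# LOINC 62292-8 is used here for illustration only.
LABEL_TO_LOINC = {
    "vitamin d, 25-hydroxy": "62292-8",
    "25-oh vitamin d": "62292-8",
    "vit d 25 oh total": "62292-8",
}


@dataclass(frozen=True)
class NormalizedResult:
    loinc: str
    value: float
    unit: str


def normalize_vitamin_d(label: str, value: float, unit: str) -> NormalizedResult:
    """Map a source label and unit onto one canonical code and ng/mL."""
    loinc = LABEL_TO_LOINC[label.strip().lower()]
    unit_key = unit.strip().lower()
    if unit_key == "ng/ml":
        canonical = value
    elif unit_key == "nmol/l":
        canonical = value / NMOL_PER_L_PER_NG_PER_ML
    else:
        raise ValueError(f"unsupported unit: {unit}")
    return NormalizedResult(loinc, round(canonical, 1), "ng/mL")


# Two sources reporting the same level in different shapes.
a = normalize_vitamin_d("Vitamin D, 25-Hydroxy", 30.0, "ng/mL")
b = normalize_vitamin_d("25-OH Vitamin D", 74.9, "nmol/L")
```

After normalization both results compare equal, which is what lets a single longitudinal series span multiple labs.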

Turns it into FHIR‑native, EHR‑ready data

Every result is rendered as FHIR R4‑compliant resources that can be written back into the EHR or into research environments without fragile glue code.
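For illustration, a single HbA1c result could be expressed as a FHIR R4 Observation along these lines. This is a hand-built sketch using a plain dictionary; the function name, patient identifier, and date are hypothetical, and a production pipeline would typically validate against the FHIR specification rather than assemble JSON by hand.

```python
import json


def hba1c_observation(patient_id: str, value: float, effective: str) -> dict:
    """Build a minimal FHIR R4 Observation for an HbA1c result."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/observation-category",
                "code": "laboratory",
            }]
        }],
        "code": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "4548-4",
                "display": "Hemoglobin A1c/Hemoglobin.total in Blood",
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": effective,
        "valueQuantity": {
            "value": value,
            "unit": "%",
            "system": "http://unitsofmeasure.org",  # UCUM
            "code": "%",
        },
    }


# Hypothetical patient id and date; 5.1 is the example value used above.
obs = hba1c_observation("example-123", 5.1, "2026-01-15")
print(json.dumps(obs, indent=2))
```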

That was our starting point.

But labs alone don’t explain why kidneys fail, why statins hurt one patient and not another, or why a standard drug dose becomes a toxic dose.

To answer those questions, ingestion has to go beyond PDFs.

From Normalization to Fusion: DNA, Drugs, and Labs

The original vision behind our Normalization Layer was simple: make labs from any source look and behave the same. Today, that same philosophy powers something much larger: multi‑omic clinical decision support.

NomosLogic’s platform now ingests:

Consumer genotyping arrays (roughly 668,000 genotypes per ancestry file)

Clinical‑grade genomes (GVCF with quality scores)

Real‑time laboratory data (chemistry, hematology, lipids, urinalysis, etc.)

Medication and problem lists, where available

Under the hood, several engines work together:

Hardy Bridge: a common language for genomics

Our nomenclature layer (Hardy Bridge) recursively links:

Star alleles (e.g., CYP2C9*3)

rsIDs (e.g., rs1057910)

HGVS notation

It prevents the classic “right gene, wrong notation” problem that silently breaks many genomics pipelines.
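A simplified way to picture the idea is a lookup in which every notation for a variant resolves to the same record. The sketch below is a flat index with one entry, not the recursive linking described above; `VariantRecord`, `build_index`, and `resolve` are hypothetical names, and the HGVS string is given in coding-sequence shorthand without a transcript identifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantRecord:
    gene: str
    star_allele: str
    rsid: str
    hgvs_c: str


# One illustrative entry; a real table would be curated from reference sources.
RECORDS = [
    VariantRecord("CYP2C9", "CYP2C9*3", "rs1057910", "c.1075A>C"),
]


def build_index(records):
    """Index every notation (case-insensitively) to its shared record."""
    index = {}
    for rec in records:
        for key in (rec.star_allele, rec.rsid, f"{rec.gene}:{rec.hgvs_c}"):
            index[key.lower()] = rec
    return index


INDEX = build_index(RECORDS)


def resolve(notation: str) -> VariantRecord:
    """Return the record for any supported notation, or raise KeyError."""
    return INDEX[notation.strip().lower()]


# All three spellings land on the same object.
same = resolve("rs1057910") is resolve("CYP2C9*3") is resolve("CYP2C9:c.1075A>C")
```

An unknown notation raises `KeyError` instead of being silently dropped, so a mismatch is visible to the caller.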

TRINITY: lab + genome fusion

Our TRINITY engine cross‑references:

The patient’s variants

The patient’s current biomarkers

A curated vault of millions of clinical and pharmacogenomic associations

Instead of returning a flat list of variants, TRINITY surfaces mechanistic stories:

“This kidney is structurally fragile because of collagen and filtration barrier variants.”

“This NSAID is unsafe because CYP2C9 poor metabolizer status pushes drug levels into a toxic range—especially at the patient’s current eGFR.”

“This statin at this dose in this genotype is a bad idea; here are safer alternatives.”
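To make the cross-referencing concrete, here is a toy sketch of how a single association might be checked against a patient's phenotype, medications, and labs. Everything in it is hypothetical: the `PatientSnapshot` and `AssociationRule` types, the rule itself, and especially the eGFR threshold, which is a placeholder and not a clinical cutoff or a description of TRINITY's internals.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class PatientSnapshot:
    phenotypes: Dict[str, str]   # gene -> metabolizer phenotype
    labs: Dict[str, float]       # biomarker -> latest value
    medication_classes: Set[str] = field(default_factory=set)


@dataclass(frozen=True)
class AssociationRule:
    gene: str
    phenotype: str
    drug_class: str
    egfr_below: float  # illustrative threshold only


def evaluate(rule: AssociationRule, patient: PatientSnapshot) -> Optional[str]:
    """Return a short finding if genotype and drug exposure both match."""
    if patient.phenotypes.get(rule.gene) != rule.phenotype:
        return None
    if rule.drug_class not in patient.medication_classes:
        return None
    message = f"{rule.drug_class} exposure with {rule.gene} {rule.phenotype} status"
    egfr = patient.labs.get("eGFR")
    if egfr is not None and egfr < rule.egfr_below:
        message += f"; reduced eGFR ({egfr:g}) raises concern further"
    return message


rule = AssociationRule("CYP2C9", "poor metabolizer", "NSAID", egfr_below=60.0)
patient = PatientSnapshot({"CYP2C9": "poor metabolizer"}, {"eGFR": 47.0}, {"NSAID"})
finding = evaluate(rule, patient)
```

The point of the sketch is the join: the finding only appears when the variant, the drug, and the lab value are evaluated together, not as three separate lists.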

Longitudinal structure by design

Everything is written into a stable schema that can track:

eGFR, creatinine, and BUN over years

Drug exposures against genotype over a lifetime

Safety alerts that should never be lost (e.g., malignant hyperthermia, DPYD toxicity, HLA‑B–linked SCARs)
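One thing a stable longitudinal schema makes easy is trend calculation. The sketch below computes a simple first-to-last annual change for a biomarker series; the function name is hypothetical, the eGFR values are illustrative and not patient data, and a real analysis would more likely fit a regression over all points.

```python
from datetime import date


def annual_slope(series):
    """Change per year between the earliest and latest (date, value) points."""
    ordered = sorted(series)
    (d0, v0), (d1, v1) = ordered[0], ordered[-1]
    years = (d1 - d0).days / 365.25
    if years <= 0:
        raise ValueError("need observations on at least two distinct dates")
    return (v1 - v0) / years


# Illustrative values only; input order does not matter.
egfr = [
    (date(2024, 1, 10), 55.0),
    (date(2022, 1, 10), 61.0),
    (date(2026, 1, 10), 47.0),
]
slope = annual_slope(egfr)  # mL/min/1.73m² per year
```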

But even with all of this, there was a subtle, critical blind spot: population frequency.

Why Frequency Matters — And Why “Global Averages” Are Dangerous

The clinical significance of a variant depends not just on what it does, but how common it is where this patient comes from.

Historically, most pipelines—including our early versions—used a single global frequency for each variant, pulled from GWAS catalogs. That meant:

A variant common in one population and rare in another was treated as if it had the same weight for everyone.

Rare but important alleles could be “smoothed out” by large global averages.

We needed a way to make our scoring ancestry‑aware without turning the product into a statistical methods paper.

That’s where Ancestry‑Aware Frequency Resonance comes in.

Ancestry‑Aware Frequency Resonance (AAFR)

At the core of our quantum scoring model, each variant sits behind a “barrier” that encodes how hard it is for that variant to matter clinically.

We calibrate that barrier with ancestry‑stratified frequencies drawn from two reference cohorts:

NIH All of Us Research Program (245,000+ whole genomes)

UK Biobank (200,000+ deeply phenotyped participants)

The same variant “rings louder” (gets amplified) when it is genuinely rare in the patient’s ancestral population, and “rings softer” when it’s common and well‑tolerated there.

This is Ancestry‑Aware Frequency Resonance (AAFR): calibrating the “loudness” of a variant to the background frequency of the population the patient actually belongs to.

Concrete Example: CYP2C19*17 and Why Ancestry Changes the Story

Consider the pharmacogene CYP2C19, which affects response to drugs like clopidogrel and certain antidepressants. The *17 allele (CYP2C19*17) increases enzyme activity, so carriers tend to be rapid metabolizers.

Using ancestry‑aware frequency resonance, we look up haplotype frequencies from All of Us and UK Biobank:

| Patient ancestry | Frequency of CYP2C19*17 (f_population) | Impact in our model |
| --- | --- | --- |
| African (afr) | 0.2213 (about 22%) | Moderately amplified |
| European (eur) | 0.2425 (about 24%) | Similar amplification |
| East Asian (eas) | 0.0058 (about 0.6%) | Strongly amplified |

Now imagine two patients with the same genotype (*1/*17):

Patient A: African ancestry

Patient B: East Asian ancestry

Under a global average model (everyone gets f ≈ 0.21):

Both patients would receive essentially the same risk score for CYP2C19*17.

The East Asian patient’s truly rare allele would be underestimated.

Under Ancestry‑Aware Frequency Resonance:

Patient A (African, where *17 is ~22%): the allele is relatively common; the model treats it as an important but not extraordinary finding.

Patient B (East Asian, where *17 is ~0.6%): the allele is genuinely rare; the effective barrier height (V_eff) is ~2.5× higher than in Patient A, amplifying its clinical priority.

Clinically, that means:

For Patient B, we are much less comfortable ignoring this “rare fast‑metabolizer” pattern when choosing drugs and doses.

For Patient A, we still respect the variant, but we recognize that many people in that population carry it without catastrophic outcomes; the “background” is different.

Same gene. Same allele. Same nominal genotype.

Very different story once ancestry enters the equation.

How AAFR Shows Up in Real Clinical Workflows

AAFR isn’t a theoretical add‑on; it’s wired into the product experience:

Dendrite Lite (consumer) asks users to select a biogeographic group (e.g., African/African American, East Asian, European, South Asian).

Dendrite Standalone (practitioner) does the same for patients, and can auto‑populate from EHR race/ethnicity fields via SMART on FHIR.

TRINITY passes this ancestry flag into our VELOX engine, which:

Retrieves ancestry‑stratified haplotype frequencies for key pharmacogenes.

Falls back to global frequencies only when ancestry isn’t provided or the data fails quality checks.
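The lookup-with-fallback behavior can be sketched in a few lines. The frequencies are the CYP2C19*17 values quoted in this article; the function name, the tuple return shape, and the range check standing in for "quality checks" are assumptions made for illustration, not VELOX's actual interface.

```python
from typing import Optional, Tuple

# CYP2C19*17 frequencies as quoted in this article.
STRATIFIED = {
    ("CYP2C19*17", "afr"): 0.2213,
    ("CYP2C19*17", "eur"): 0.2425,
    ("CYP2C19*17", "eas"): 0.0058,
}
GLOBAL = {"CYP2C19*17": 0.21}


def lookup_frequency(allele: str, ancestry: Optional[str]) -> Tuple[float, str]:
    """Return (frequency, source), preferring ancestry-stratified data."""
    if ancestry is not None:
        key = ancestry.strip().lower()
        freq = STRATIFIED.get((allele, key))
        # Stand-in quality check: the value must be a usable proportion.
        if freq is not None and 0.0 < freq < 1.0:
            return freq, f"stratified:{key}"
    # No ancestry given, or no usable stratified value: use the global figure.
    return GLOBAL[allele], "global"


patient_a = lookup_frequency("CYP2C19*17", "afr")
patient_b = lookup_frequency("CYP2C19*17", "EAS")
unknown = lookup_frequency("CYP2C19*17", None)
```

Returning the source alongside the number keeps it visible downstream whether a score was computed from stratified or global data.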

On top of that, phenotype frequencies from All of Us let us report context in plain language, for example:

“Your CYP2C19 genotype (*1/*17) maps to a Rapid Metabolizer status.

This phenotype is observed in about 12% of European populations and around 2–3% of African populations (All of Us, n=245K).”

This is what it means for an algorithm to be patient‑specific instead of merely “genotype‑specific.”

A Real Case: Idiopathic Kidney Decline Meets Ancestry‑Aware Genomics

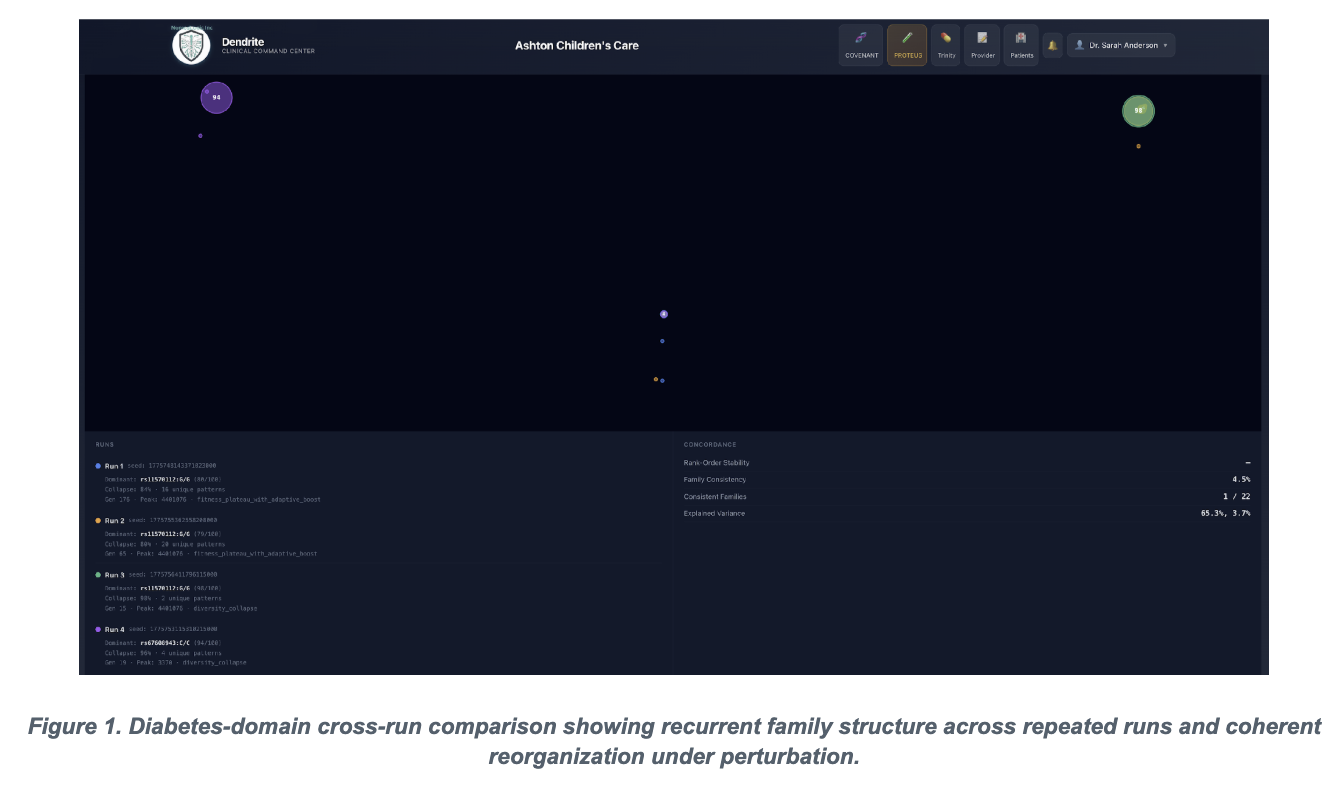

In early 2026, we prospectively tested the platform on a patient with Stage 3a chronic kidney disease:

eGFR: 47 mL/min/1.73m²

Normal BMI, normal blood pressure, normal A1c

No obvious cause on conventional workup; charted as “idiopathic”

NomosLogic’s TRINITY engine ingested:

668,893 genotypes from a consumer ancestry file

Concurrent labs (metabolic panel, lipids, urinalysis)

The patient’s self‑identified ancestry, which drove AAFR

In about 130 seconds, the platform surfaced a multi‑omic hypothesis:

Structural vulnerability

Variants in collagen IV and nephrin consistent with a filtration barrier that is mechanically fragile in this patient’s ancestral population.

Chemical trigger

A poor‑metabolizer genotype in CYP2C9, combined with the patient’s current NSAID use and eGFR, pointed to chronic intrinsic nephrotoxicity.

Ancestry‑aware weighting

Several alleles the global literature treats as “background” were, in this patient’s ancestry, genuinely rare and destabilizing. AAFR amplified these in the scoring so they weren’t lost in the noise.

The same run produced a lifetime pharmacogenomic safety map (statins, fluoropyrimidines, anesthesia risk, etc.), with ancestry‑aware prevalence estimates that helped the clinician prioritize what to act on now versus what to document for life.

The nephrologist ordered confirmatory genetics; results are pending. The goal isn’t to replace that step—it’s to make sure those confirmatory tests are targeted by a mechanistic, ancestry‑aware hypothesis instead of guesswork.

Why Speed, Structure, and Ancestry Are the Real Moat

Healthcare is full of beautiful PDFs that arrive too late and ignore who the patient actually is.

Legacy genomics and pharmacogenomics workflows live on 7–21 day turnaround times and “one size fits all” frequency assumptions. By the time results are back, the drug is already prescribed, the contrast study already done, the chemo already hung—and the underlying math assumed every patient lives in the same global population.

NomosLogic’s thesis:

Speed without interpretability is noise.

Interpretability without speed is useless.

And interpretability that ignores ancestry is incomplete.

By automating:

Reading unstructured reports

Normalizing units, codes, and genomic nomenclature

Applying Ancestry‑Aware Frequency Resonance to calibrate variant significance to the patient’s true background

Fusing DNA + labs + guidelines into a single, standards‑coded record

…we remove the grunt work that slows clinicians and clogs AI pipelines, and we correct the “global average” bias that quietly distorts genomic risk.

What’s left is the part humans (and advanced models) should be doing:

Intervention.

Choosing the right drug, dose, and route for this patient, in this body, from this ancestry.

Documenting the lifetime alerts that matter and will remain true decades from now.

Moving from “we don’t know why this is happening” to “here’s a mechanism we can test and act on.”

That, more than any single algorithmic trick, is our moat:

a platform that can take the messy, fragmented, and population‑biased reality of today’s clinical data and turn it into real‑time, ancestry‑aware, multi‑omic decisions—without asking anyone to retype a single lab value.