Why Deterministic Systems Win Long Term

By Matt Hardy — CEO, NomosLogic Inc · Engineering Leadership and Strategy · Systems Architecture · Consumer Infrastructure · 30 Years Building at Depth

I spent the early part of my career writing code that had to be exactly right.

Kernel-level code doesn't negotiate. There's no graceful degradation at the OS level, no "close enough" when you're managing memory at the boundary between hardware and software. Either the logic is correct and the system holds, or it isn't and it doesn't. That's the whole equation.

That discipline shaped how I think about every system I've built since. And the longer I've been in this industry, the more convinced I've become that it applies far beyond kernel engineering — and that most modern software development has drifted dangerously far from it.

What I mean by approximation

Approximation in software shows up in a lot of forms, and not all of them are obvious.

The most visible kind is the probabilistic output: the model that's right 94% of the time, the recommendation engine that optimizes for engagement rather than accuracy, the fraud score that flags "likely" without committing to "yes." These systems are genuinely useful. I'm not arguing against them. But they carry a cost that often gets underweighted: you can't fully trust their output without understanding their failure modes, and most systems built on top of them are designed without that understanding.

The less visible kind is architectural approximation. The system that was designed to handle "most" cases and quietly passes edge cases to a manual review queue that nobody monitors. The data pipeline that's "probably" idempotent. The integration that works "as long as the upstream system behaves normally." These aren't probabilistic by design — they're approximate by neglect. Someone decided not to solve the hard case and moved on.

Both kinds compound. And both kinds become much more expensive to unwind than they were to avoid.
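
To make the "probably idempotent" pipeline concrete, here is a minimal Python sketch of a step that is idempotent by construction rather than by hope. The `Ledger` class, the `apply_credit` method, and the event IDs are hypothetical names chosen for illustration, and the in-memory set stands in for whatever durable store a real pipeline would need.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Toy in-memory ledger (hypothetical); a real system needs durable storage."""
    balance: int = 0
    applied: set[str] = field(default_factory=set)

    def apply_credit(self, event_id: str, amount: int) -> bool:
        """Apply a credit at most once per event_id.

        Returns True if the credit was applied, False if it was a replay.
        """
        if event_id in self.applied:
            return False  # replayed delivery: balance is left unchanged
        self.balance += amount
        self.applied.add(event_id)
        return True


ledger = Ledger()
first = ledger.apply_credit("evt-001", 50)
replay = ledger.apply_credit("evt-001", 50)  # upstream retried the same event
```

After both calls the balance reflects a single credit. The replay case is handled explicitly instead of being left to the assumption that the upstream system never retries.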

Why determinism is having a moment — and why it matters

There's an interesting tension in the current technology landscape.

On one side, the AI wave has accelerated the adoption of probabilistic systems at a scale we've never seen before. Language models, recommendation engines, classification systems — all of them are fundamentally approximate. They're also genuinely powerful, and the industry is right to be excited about what they make possible.

On the other side, the domains where these systems are being deployed are getting more consequential. Healthcare. Financial infrastructure. Legal reasoning. Identity. These are domains where "usually correct" is not an acceptable operating standard — where the cost of the wrong answer in the wrong case is not a degraded user experience but something much harder to recover from.

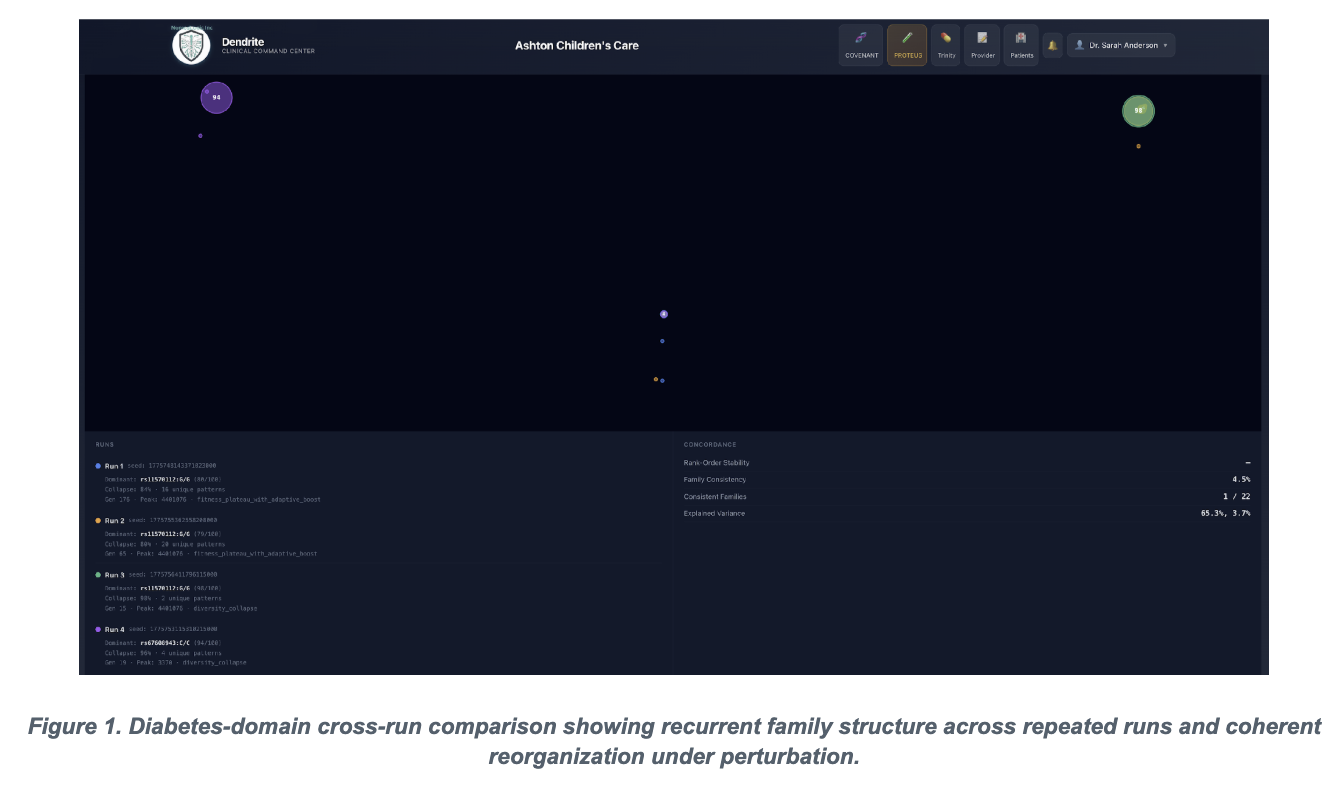

The systems that will win in those domains long term are not the ones with the most impressive benchmark scores. They're the ones that can tell you exactly why they produced a given output, trace that output to a specific chain of logic, and guarantee that the same input will produce the same output every time.

That's determinism. And it's not in tension with AI — it's the infrastructure that makes AI trustworthy enough to deploy in places that matter.
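
As a rough illustration of "same input, same output, with a traceable chain of logic," the sketch below evaluates a fixed, ordered list of rules and returns the outcome together with the rules it checked along the way. The rule names, fields, and thresholds are invented for the example and are not drawn from any real system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    applies: Callable[[dict], bool]
    outcome: str


@dataclass(frozen=True)
class Decision:
    outcome: str
    trace: tuple[str, ...]  # every rule evaluated, in order


# Hypothetical rules, evaluated in a fixed order; the first match wins.
RULES = (
    Rule("missing_identity", lambda r: not r.get("identity_verified", False), "reject"),
    Rule("over_limit", lambda r: r.get("amount", 0) > 10_000, "manual_review"),
    Rule("default_allow", lambda r: True, "approve"),
)


def decide(request: dict) -> Decision:
    """Return the outcome plus a record of which rules were checked."""
    trace = []
    for rule in RULES:
        if rule.applies(request):
            trace.append(f"{rule.name}: matched -> {rule.outcome}")
            return Decision(rule.outcome, tuple(trace))
        trace.append(f"{rule.name}: not matched")
    raise ValueError("no rule matched")  # not reachable while a catch-all rule exists


request = {"identity_verified": True, "amount": 25_000}
decision = decide(request)
```

Because `decide` depends only on its input and the fixed rule list, calling it again with the same request yields an equal `Decision`, and the `trace` field answers "why did the system do that" directly.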

What I've learned building both kinds of systems

I've worked on systems that were approximate by design and systems that were deterministic by requirement. The engineering experience is genuinely different.

Approximate systems are faster to build initially. You can move quickly, iterate on outputs, tune the model. The feedback loop is short and the early results are often impressive. The problems show up later — in production edge cases, in regulatory scrutiny, in the moment when someone asks "why did the system do that" and there's no clean answer.

Deterministic systems are harder to build. You have to define the logic completely before you can trust the output. You have to handle the cases you'd rather not think about. You have to be honest about what the system knows and what it doesn't, and build that distinction into the architecture explicitly. There's no hiding behind "the model decided."

But deterministic systems age better. They're auditable. They're explainable. They scale without accumulating the kind of invisible complexity that eventually collapses approximate systems. And in regulated or high-stakes environments, they're often the only systems that can actually be deployed.
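
One way to build the distinction between what the system knows and what it doesn't into the architecture is to make "unknown" a first-class return value instead of a guess. The following is a minimal sketch under that assumption; the `Known` and `Unknown` types, the `country_for` function, and the prefix table are all hypothetical.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Known:
    value: str


@dataclass(frozen=True)
class Unknown:
    reason: str


Result = Union[Known, Unknown]

# Hypothetical, deliberately incomplete lookup table.
COUNTRY_BY_PREFIX = {"+1": "US/CA", "+44": "GB", "+49": "DE"}


def country_for(phone: str) -> Result:
    """Return Known(...) only when a rule covers the input; otherwise say so."""
    # Check longer prefixes first so a short prefix cannot shadow a longer one.
    for prefix in sorted(COUNTRY_BY_PREFIX, key=len, reverse=True):
        if phone.startswith(prefix):
            return Known(COUNTRY_BY_PREFIX[prefix])
    return Unknown(f"no prefix rule covers {phone!r}")


covered = country_for("+442071234567")
uncovered = country_for("+99912345")
```

Callers have to handle the `Unknown` case explicitly, so the uncovered input cannot quietly pass through as a best guess.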

The leadership dimension

This is a strategic choice, not just a technical one — and I think it deserves more boardroom attention than it gets.

When an organization decides to build on approximate foundations, it's making a bet that the domains it operates in will continue to tolerate approximation. That regulators won't ask hard questions. That customers won't demand explainability. That the edge cases will stay edge cases.

That bet is getting harder to make with confidence. Explainability requirements are tightening across healthcare, finance, and AI governance broadly. Customers are becoming more sophisticated about what they're agreeing to when they interact with automated systems. And the organizations that built their core infrastructure on probabilistic foundations are going to face an expensive reckoning when the standard shifts.

The organizations building deterministic infrastructure now — even when it's harder, even when the approximate solution would ship faster — are making a different bet. That correctness compounds. That trust is a moat. That the ability to say "here is exactly why the system produced this output" will be worth more in five years than it is today.

I think they're right.

Approximation has its place

I want to be precise here, because I'm not arguing for a world without probabilistic systems.

Approximation is the right tool for a large category of problems. Recommendation. Discovery. Pattern recognition at scale. Anywhere the cost of an individual wrong answer is low and the value of getting most answers right is high — these are legitimate domains for probabilistic approaches, and the technology is genuinely impressive.

What I'm arguing against is the undifferentiated application of approximate methods to problems that require deterministic ones. And the organizational habit of reaching for the probabilistic tool first because it ships faster, without asking whether the problem actually tolerates approximation.

The question I ask before any significant architectural decision is simple: what is the cost of being wrong in this system, and does our architecture reflect that cost accurately?

If the answer is that being wrong is cheap and recoverable — approximate away. If the answer is that being wrong has consequences that are hard to undo — build it right the first time.
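
The same question can be put as back-of-the-envelope arithmetic. In the sketch below every number is made up for illustration: the error rate is the same in both cases (600 errors per 10,000 decisions, mirroring the "right 94% of the time" example earlier), and only the hypothetical cost of a single wrong answer changes.

```python
def expected_error_cost_cents(
    decisions: int, errors_per_10k: int, cost_per_error_cents: int
) -> int:
    """Expected total cost of wrong answers, in cents (integer arithmetic)."""
    return decisions * errors_per_10k * cost_per_error_cents // 10_000


# Hypothetical scenario A: a bad recommendation costs about one cent.
recommendations = expected_error_cost_cents(1_000_000, 600, 1)

# Hypothetical scenario B: a wrong payment decision costs about fifty dollars.
payments = expected_error_cost_cents(1_000_000, 600, 5_000)
```

With these assumed inputs, the first case comes to 60,000 cents and the second to 300,000,000 cents. The accuracy is identical in both; what differs is the cost of being wrong, which is the quantity the architecture needs to reflect.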

Thirty years in, I still find deterministic systems more interesting than approximate ones. Not because they're easier — they're not. But because there's a kind of intellectual honesty in a system that has to be exactly right. It forces clarity about what you actually know, what you're actually guaranteeing, and where the real limits of the system are.

In an era of increasingly powerful approximation engines, that clarity is becoming rarer. Which means it's becoming more valuable.

Matt Hardy is CEO of NomosLogic Inc, with 30 years of experience in systems architecture, kernel engineering, and enterprise infrastructure.