Medicine Needs Governable Systems, Not Just Intelligent Ones

The most important divide emerging in medicine is not human versus machine.

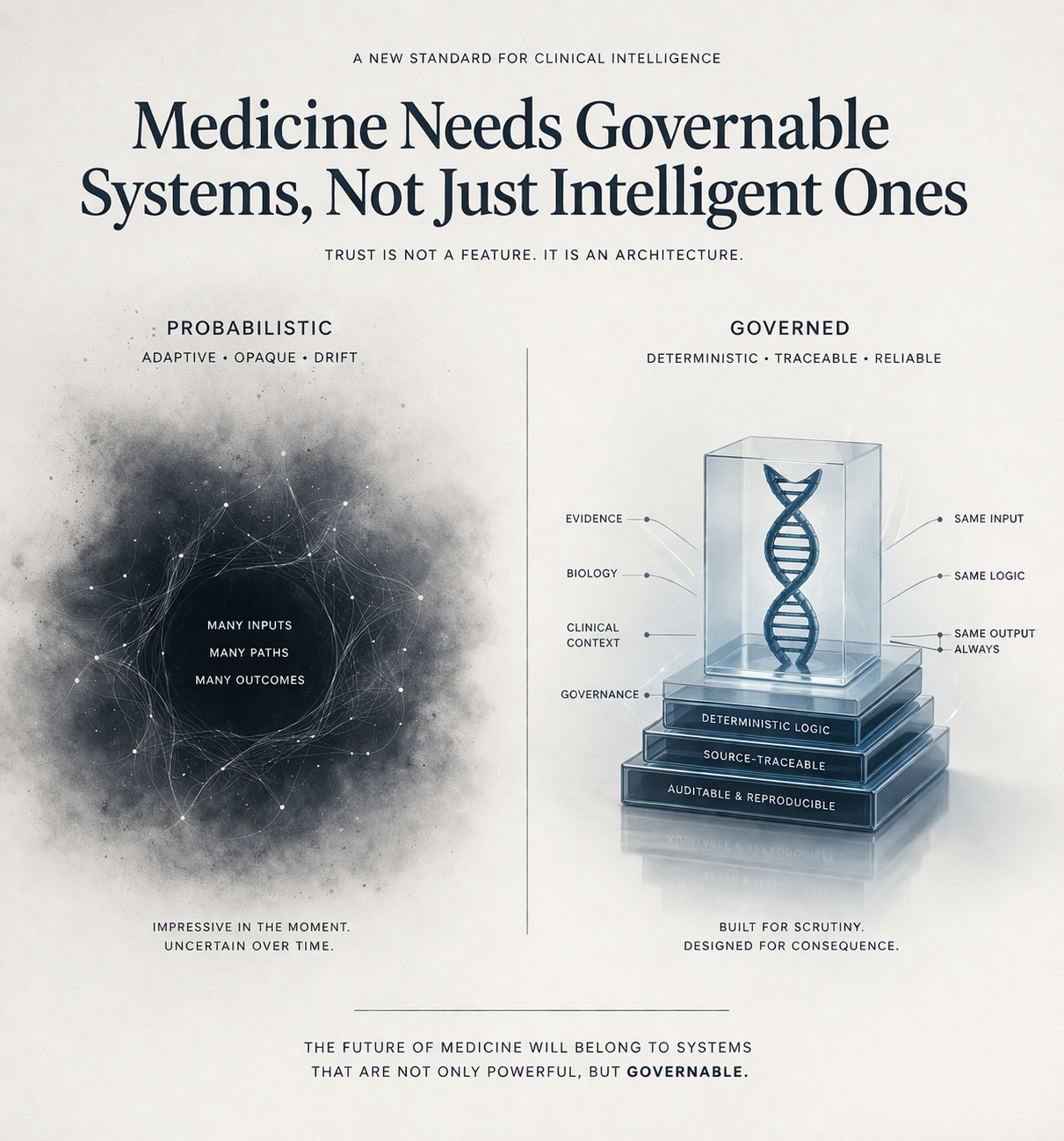

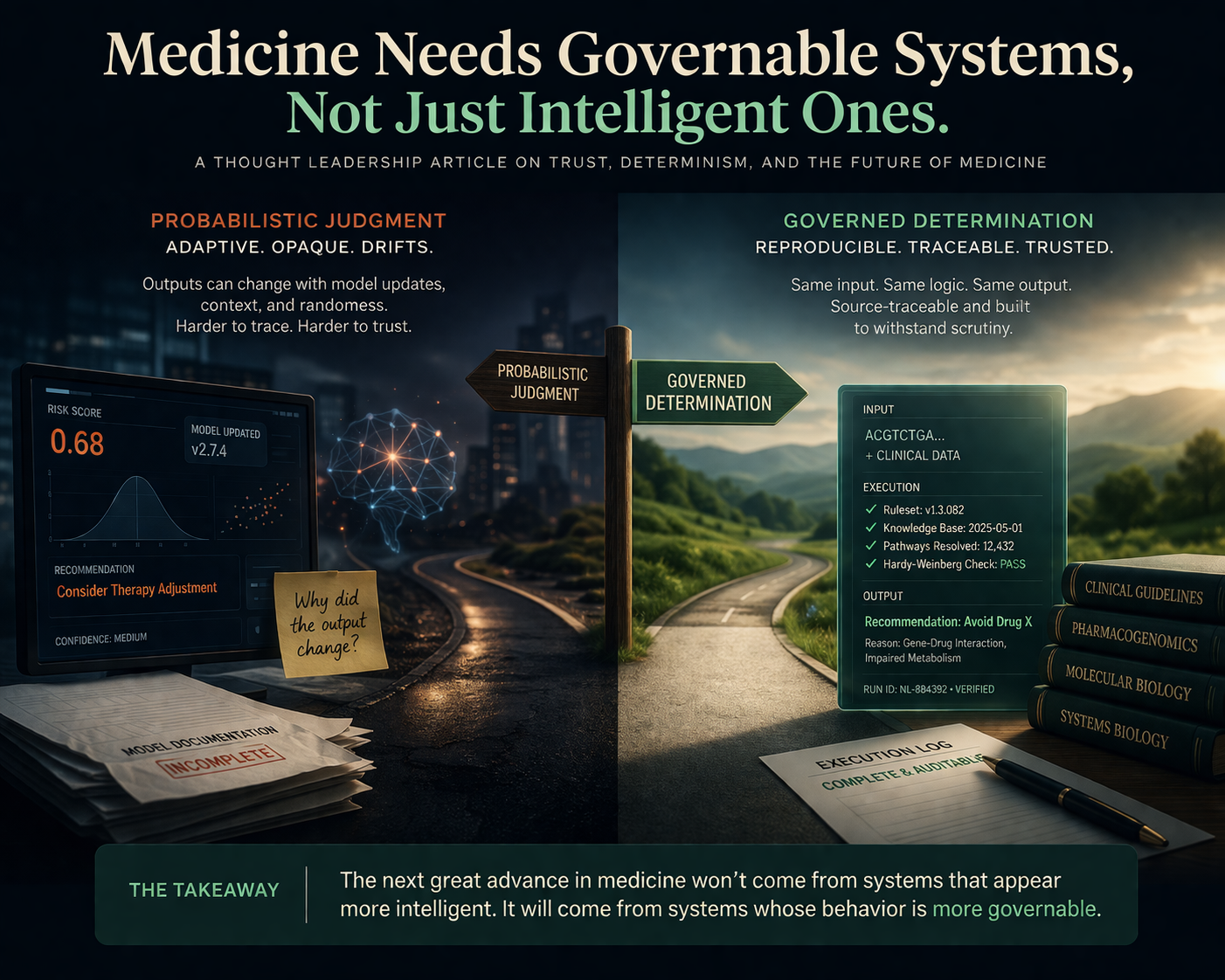

It is probabilistic judgment versus governed determination.

That distinction is being obscured by the current language of healthcare technology, where almost everything is described as intelligence, assistance, automation, or augmentation. Those words blur a boundary that should remain extremely clear: there is a profound difference between a system that helps people navigate information and a system that participates in clinical judgment.

The interface layer can tolerate ambiguity. In some cases, it can even benefit from it. Language can be adaptive. Summaries can be probabilistic. Search can be exploratory. Human conversation itself is full of approximation, implication, and revision.

But the clinical layer is different.

At the point where a system contributes to medication guidance, molecular interpretation, or disease understanding, ambiguity is no longer a neutral property. It becomes a governance problem. If the same input can produce materially different outputs over time, then trust is no longer anchored in evidence alone. It is anchored in a shifting relationship between model state, unseen updates, contextual variation, and post hoc explanation.

That is not a stable foundation for medicine.
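To make the reproducibility concern concrete, here is a minimal sketch of what a "governed determination" could look like in code. It assumes a deliberately toy setup: the ruleset, the `metabolizer_status` field, the version string, and the `GUIDANCE_*` labels are all hypothetical placeholders, not clinical content and not any real system's API. The point is only the shape: a pure lookup against a versioned ruleset, a fingerprint of the input, and an explicit out-of-scope result instead of a guess.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical, illustrative ruleset. Labels and version are placeholders,
# not clinical guidance.
RULESET_VERSION = "example-rules-0.1"
RULES = {"slow": "GUIDANCE_A", "normal": "GUIDANCE_B"}


@dataclass(frozen=True)
class Determination:
    output: str
    ruleset_version: str
    input_digest: str


def digest(payload: dict) -> str:
    """SHA-256 fingerprint of the input; keys are sorted so ordering cannot change it."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def determine(payload: dict) -> Determination:
    """Pure lookup: no randomness, no hidden state, explicit boundary for unknown inputs."""
    category = payload.get("metabolizer_status")
    output = RULES.get(category, "OUT_OF_SCOPE: refer for review")
    return Determination(output, RULESET_VERSION, digest(payload))


def is_reproducible(payload: dict, runs: int = 5) -> bool:
    """True if repeated runs on the same input yield one identical record."""
    return len({determine(payload) for _ in range(runs)}) == 1
```

Under these assumptions, the same input always yields the same record, and that record names the ruleset version and the input fingerprint, so a later reviewer can trace, rerun, and challenge it. A system whose output depends on sampling or on silently updated model state could not pass the `is_reproducible` check in the same way.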

For years, healthcare has treated the main bottleneck as data scarcity. That has been comforting because it suggests a familiar solution: collect more, compute more, model more, infer more. But in genomics especially, that framing is increasingly inadequate. The data already exists in enormous quantity. What remains missing is the layer that can transform that data into reproducible, clinically usable structure.

That is an infrastructure problem, not an intelligence problem.

And infrastructure has a different standard.

Infrastructure must be boring in the most important way. It must be auditable, repeatable, legible, and durable under stress. It must behave correctly not only when conditions are ideal, but when scrutiny increases, when edge cases appear, and when responsibility becomes real. It must survive not just curiosity, but consequence.

This is why so much of the current discourse around AI in medicine misses the deeper issue. The question is not whether models are impressive. The question is whether the architecture beneath clinical use is governable.

Can it be traced?

Can it be reproduced?

Can it be challenged?

Can it be bounded?

Can it be trusted not only when it is helpful, but when it is wrong?

Those are infrastructure questions.

They are also moral questions, because medicine is one of the few domains where epistemology and consequence collide directly. A mistake is not merely a defect in output. It becomes a lived event in a human body.

That is why medicine should be extremely careful about normalizing systems whose core behavior is probabilistic while their public presentation implies stability. The danger is not only error. It is misplaced confidence. It is the quiet migration of authority from governed logic to adaptive inference before we have built the institutional language to describe that shift honestly.

This does not mean computational systems should be excluded from medicine. Quite the opposite. It means we should be much more precise about where different kinds of systems belong.

There is a legitimate place for adaptive systems at the experience layer: explanation, translation, interaction, navigation, communication.

But the clinical core should be held to a different standard.

Not because medicine is old-fashioned. Because medicine is accountable.

And accountability requires more than performance. It requires determinacy where determinacy is possible, and explicit boundaries where it is not.

The next great advance in medicine may not come from building systems that appear more intelligent.

It may come from building systems whose behavior is more governable.

That is a quieter ambition. Less theatrical. Less fashionable.

But it may prove to be the more important one.