Intro

There’s a growing conversation happening around AI in healthcare—how it should be used, how it should be regulated, and what standards it should be held to.

This week, we submitted a comment to the FDA as part of that process.

Not because we have all the answers.

But because we’re building in this space—and we believe it’s important to help shape what “safe and effective” actually means in practice.

The Current Conversation

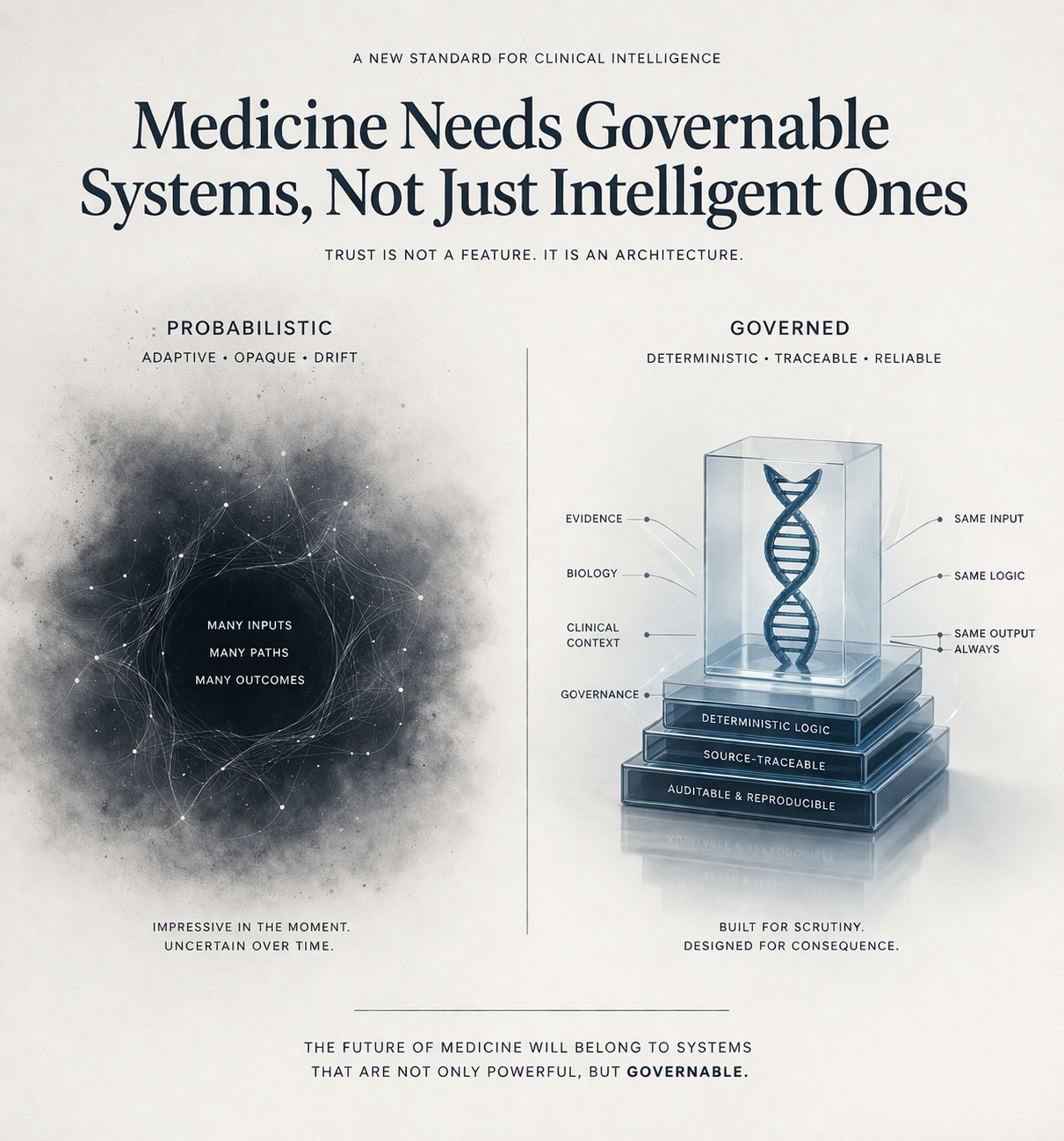

A lot of the discussion around AI in healthcare today focuses on:

model performance

access to data

scalability

These are important.

But they are not the core problem.

In clinical settings, the question is not:

Can a system generate an answer?

It’s:

Can that answer be trusted, understood, and defended?

Where Systems Break Down

From an engineering perspective, many systems fail in predictable ways:

Outputs cannot be traced back to evidence

Results cannot be reproduced consistently

Confidence is presented without clarity about what supports it

Models operate as black boxes in high-stakes environments

These issues are manageable in consumer applications.

They are not acceptable in clinical decision-making.

What We Believe Matters

In our FDA comment, we focused on a few principles that we believe are foundational:

1. Traceability

Every output that influences a clinical decision should be explainable in terms of underlying biological or clinical evidence.

2. Reproducibility

Given the same inputs, a system should produce consistent, stable outputs.

3. Evidence Separation

Systems must clearly distinguish between:

established knowledge

supported inference

and uncertainty

4. Conservative Failure Modes

When evidence is limited or conflicting, systems should default toward caution—not confidence.
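To make these four principles a little more concrete, here is a minimal Python sketch. It is illustrative only and does not describe how any real product works. The names `Finding`, `EvidenceLevel`, and `assess`, the action labels, and the example statements and source identifiers are all hypothetical, chosen just for this example.

```python
from dataclasses import dataclass
from enum import Enum
import hashlib
import json


class EvidenceLevel(Enum):
    # Evidence separation: every finding carries exactly one of these labels.
    ESTABLISHED = "established knowledge"
    INFERRED = "supported inference"
    UNCERTAIN = "uncertainty"


@dataclass(frozen=True)
class Finding:
    statement: str
    level: EvidenceLevel
    sources: tuple  # identifiers of the evidence behind the statement


def assess(findings):
    """Build a decision record from a list of findings (hypothetical rules)."""
    # Traceability: a finding with no cited source counts as a gap.
    untraceable = [f for f in findings if not f.sources]
    uncertain = [f for f in findings if f.level is EvidenceLevel.UNCERTAIN]

    # Conservative failure mode: any gap means deferring, not asserting.
    if not findings or untraceable or uncertain:
        action = "defer_to_clinician"
    else:
        action = "report_with_evidence"

    # Sort everything so the record does not depend on input order.
    ordered = sorted(
        findings,
        key=lambda f: (f.statement, f.level.value, tuple(sorted(f.sources))),
    )
    record = {
        "action": action,
        "findings": [
            {
                "statement": f.statement,
                "level": f.level.value,
                "sources": sorted(f.sources),
            }
            for f in ordered
        ],
    }

    # Reproducibility: identical inputs yield an identical fingerprint.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["fingerprint"] = hashlib.sha256(payload).hexdigest()
    return record


# Hypothetical usage with made-up statements and source identifiers.
known = Finding("Marker A is associated with condition B",
                EvidenceLevel.ESTABLISHED, ("source-1",))
unclear = Finding("Marker C may be relevant",
                  EvidenceLevel.UNCERTAIN, ("source-2",))

print(assess([known])["action"])
print(assess([known, unclear])["action"])
```

The sketch contains no randomness and no hidden state, so the same findings always produce the same record and fingerprint. A single untraceable or uncertain finding is enough to switch the outcome from reporting to deferring.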

Why This Matters Now

AI capability is advancing rapidly.

But clinical systems don’t fail because they lack capability.

They fail when:

outputs cannot be validated

decisions cannot be defended

or trust breaks down between patient and physician

If we don’t get the standards right now, we risk building systems that scale quickly—but fail when it matters most.

A Different Approach

At NomosLogic, we’ve taken a different path.

Instead of optimizing for probabilistic output generation, we’ve focused on:

deterministic logic

structured biological relationships

and systems that prioritize interpretability and validation

Not because it’s easier.

But because it aligns more closely with how clinical decisions are actually made.

The Role of Industry

Regulation should not be something that happens to companies.

It should be informed by the people building and using these systems.

That includes:

clinicians

researchers

engineers

and patients

Submitting a comment is a small part of that.

But it’s one way of taking part in a broader, shared responsibility.

Closing

Healthcare doesn’t need more impressive demos.

It needs systems that can be trusted in real clinical environments.

That means:

clear standards

defensible outputs

and a shared understanding of what “safe” actually looks like

We’re committed to building toward that standard—and contributing where we can to help define it.

Read the full comment

Opportunity for Public Comment on Rare Disease Educational Materials from the Center for Drug Evaluation and Research’s Accelerating Rare disease Cures Program and the Rare Disease Innovation Hub